https://x.com/asadkhaliq/status/2019769887087046986 Asad Khaliq @asadkhaliq

Asad Khaliq @asadkhaliq (essay) Life At The Edge

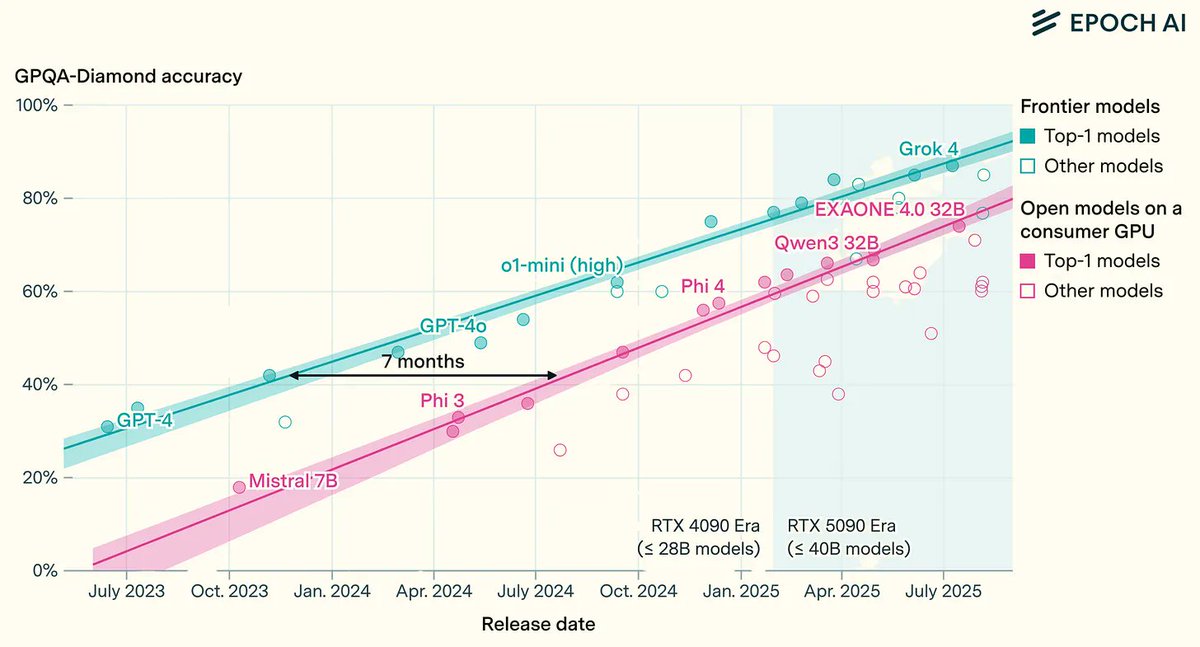

"Local AI" today is mostly about giving models OS-level access so that more files and context can be transferred to the cloud for inference. But intelligence is about to diffuse to the edge, just as computing did in the 80s and 90s.

Some thoughts on rent vs own for inference, Apple events becoming great again, God models, and the coming dance of edge and cloud

Friday, February 6, 2026 AI